Saliency in VR: How do People Explore Virtual Environments?

Using eyetracking data to explore visual saliency in VR

Vincent Sitzmann*, Ana Serrano Pacheu*, Amy Pavel, Maneesh Agrawala, Diego Gutierrez, Belen Masia, Gordon Wetzstein

Understanding how people explore immersive virtual environments is crucial for many applications, such as designing virtual reality (VR) content, developing new compression algorithms, or learning computational models of saliency or visual attention.

Whereas a body of recent work has focused on modeling saliency in desktop viewing conditions, VR is very different from these conditions in that viewing behavior is governed by stereoscopic vision and by the complex interaction of head orientation, gaze, and other kinematic constraints.

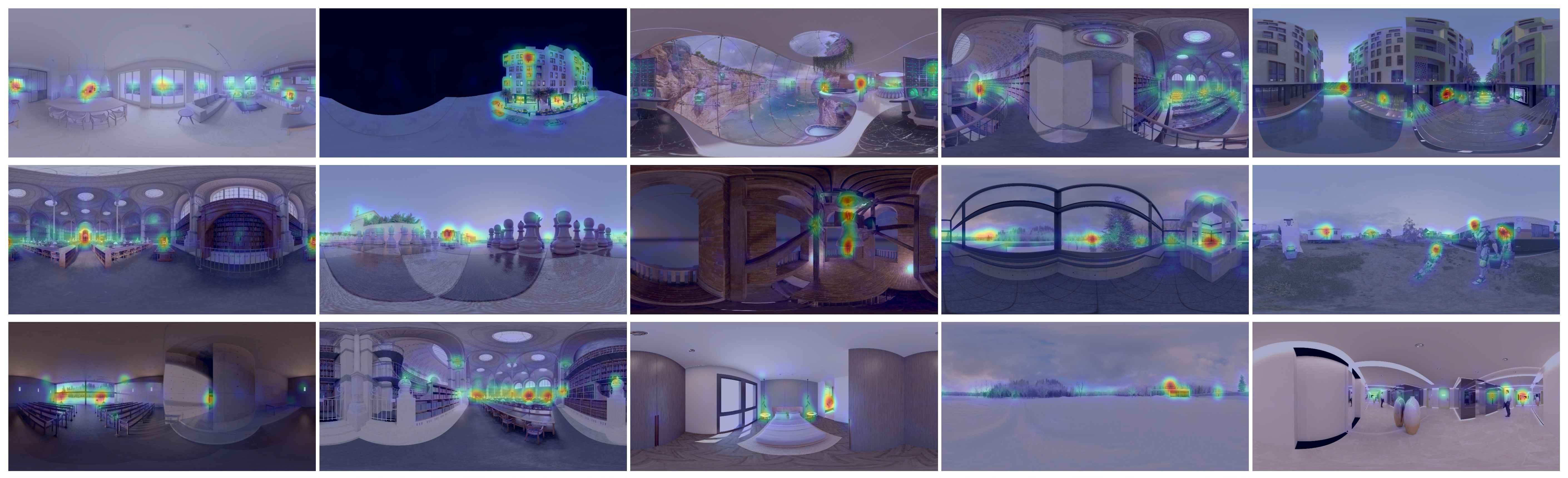

To further our understanding of viewing behavior and saliency in VR, we capture and analyze gaze and head orientation data of 169 users exploring stereoscopic, static omni-directional panoramas, for a total of 1980 head and gaze trajectories for three different viewing conditions. We provide a thorough analysis of our data, which leads to several important insights, such as the existence of a particular fixation bias, which we then use to adapt existing saliency predictors to immersive VR conditions. In addition, we explore other applications of our data and analysis, including automatic alignment of VR video cuts, panorama thumbnails, panorama video synopsis, and saliency-based compression.

Submission Video

Scanpath Samples

Related work

Check out our related work on how people explore virtual reality scenes:

Movie Editing and Cognitive Event Segmentation in Virtual Reality Video

Bibtex

References

For full details, please refer to our paper.

Send feedback and questions to Vincent Sitzmann